Core Web Vitals Optimization: A Practical Guide

A hands-on guide to optimizing Core Web Vitals (LCP, INP, CLS). Covers measurement, diagnosis, and specific fixes with before/after examples from real projects.

Core Web Vitals are a Google Search ranking signal and a direct measure of user experience. After optimizing several production applications, here’s my practical playbook for hitting good scores on all three metrics.

WEB VITALS IN ONE SCREEN

The fastest way to improve Core Web Vitals is to treat each metric as a different kind of failure mode. They do not respond to the same fixes.

LCP

Fix what delays the largest visible element

Largest Contentful Paint is usually an image, hero block, or large text section arriving too late.

- Preload the real hero asset

- Reduce server response time

- Avoid oversized, non-responsive media
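The first and last bullets interact: once the hero is served as a responsive image, a preload hint that names a single fixed file can fetch a different candidate than the one the browser later picks. A minimal sketch of one way to keep the two in sync, by generating the preload tag from the same candidate list as the image. The `ImageCandidate` type and both helper functions are hypothetical names; `imagesrcset` and `imagesizes` are the standard attributes for responsive preloads. The sketch does not HTML-escape its inputs, so it assumes trusted URLs.

```typescript
// Hypothetical shape: one entry per rendition of the hero image.
interface ImageCandidate {
  url: string;
  width: number; // intrinsic width in pixels, used for the `w` descriptor
}

// Builds a srcset string ordered from the smallest to the largest width.
function buildSrcset(candidates: readonly ImageCandidate[]): string {
  return [...candidates]
    .sort((a, b) => a.width - b.width)
    .map((c) => `${c.url} ${c.width}w`)
    .join(', ');
}

// Returns a <link rel="preload"> tag that describes the same candidates
// and sizes as the <img>, so both resolve to the same file.
function buildHeroPreload(candidates: readonly ImageCandidate[], sizes: string): string {
  if (candidates.length === 0) {
    throw new Error('At least one image candidate is required');
  }
  return (
    `<link rel="preload" as="image" ` +
    `imagesrcset="${buildSrcset(candidates)}" ` +
    `imagesizes="${sizes}" fetchpriority="high" />`
  );
}

// File names and sizes reuse the responsive image example from this guide.
const heroCandidates: ImageCandidate[] = [
  { url: '/hero-800.webp', width: 800 },
  { url: '/hero-400.webp', width: 400 },
  { url: '/hero-1200.webp', width: 1200 },
];

console.log(buildHeroPreload(heroCandidates, '(max-width: 768px) 100vw, 800px'));
```

Generating both tags from one list is a design choice, not a requirement. It simply removes the chance that someone updates the image but forgets the preload.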

INP

Remove long tasks from interaction paths

Interaction to Next Paint is mainly about keeping the main thread free enough to respond when users click or type.

- Break synchronous work into chunks

- Defer non-urgent updates

- Debounce search-heavy interactions
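Breaking work into chunks does not have to mean a fixed number of items per chunk. A sketch of a time-budgeted variant, which yields whenever the current slice has run longer than a budget. Browsers report main-thread tasks over 50 ms as long tasks, so the budget is kept below that. `yieldToMain`, `processWithBudget`, and the 25 ms default are assumptions for illustration. The fallback to `setTimeout` covers environments without `scheduler.yield()`.

```typescript
// Yields control so pending input can be handled. Uses scheduler.yield()
// where the environment provides it, otherwise a zero-delay timeout.
function yieldToMain(): Promise<void> {
  const sched = (globalThis as any).scheduler;
  if (sched && typeof sched.yield === 'function') {
    return sched.yield();
  }
  return new Promise<void>((resolve) => setTimeout(resolve, 0));
}

// Runs `work` on every item in order and yields each time a slice of work
// has used up `budgetMs`. Resolves with the number of yields performed.
async function processWithBudget<T>(
  items: readonly T[],
  work: (item: T) => void,
  budgetMs = 25, // assumed default, tune per application
): Promise<number> {
  let yields = 0;
  let sliceStart = performance.now();
  for (const item of items) {
    work(item);
    if (performance.now() - sliceStart >= budgetMs) {
      await yieldToMain();
      yields += 1;
      sliceStart = performance.now();
    }
  }
  return yields;
}

// Usage: a budget of 0 forces a yield after every item.
const doubled: number[] = [];
processWithBudget([1, 2, 3], (n) => { doubled.push(n * 2); }, 0).then((yields) => {
  console.log(doubled, yields);
});
```

A time budget adapts to slow devices, where fifty items may take far longer than on a development machine.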

CLS

Reserve space before content arrives

Cumulative Layout Shift punishes surprise movement. The fix is usually explicit dimensions and stable placeholders.

- Set media dimensions

- Reserve async content slots

- Avoid injecting banners above existing content

MEASUREMENT

Use field data before declaring victory

Lab scores are useful for diagnosis, but real-user telemetry is what tells you whether the experience is actually improving.

- Collect `web-vitals` data

- Track regressions over time

- Validate on realistic devices and networks
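Tracking regressions needs a single number per period. Core Web Vitals are assessed at the 75th percentile of real-user samples, so comparing p75 values is a reasonable starting point. A minimal sketch, assuming raw samples are already stored somewhere. The function names, the 10% tolerance, and the sample values are invented for illustration.

```typescript
// Nearest-rank percentile over raw metric samples (p between 0 and 100).
function percentile(samples: readonly number[], p: number): number {
  if (samples.length === 0) {
    throw new Error('No samples');
  }
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Flags a regression when the current p75 is worse than the baseline p75
// by more than `tolerance` (hypothetical relative threshold, 0.1 = 10%).
function hasRegressed(
  baseline: readonly number[],
  current: readonly number[],
  tolerance = 0.1,
): boolean {
  const before = percentile(baseline, 75);
  const after = percentile(current, 75);
  return after > before * (1 + tolerance);
}

// Made-up LCP samples in milliseconds, for illustration only.
const lastWeekLcp = [1200, 1400, 1500, 1800, 2100, 2300, 2600, 3900];
const thisWeekLcp = [1300, 1500, 1700, 2200, 2600, 2900, 3100, 4200];

console.log(percentile(lastWeekLcp, 75)); // 2300
console.log(percentile(thisWeekLcp, 75)); // 2900
console.log(hasRegressed(lastWeekLcp, thisWeekLcp)); // true
```

The tolerance keeps ordinary week-to-week noise from raising alerts. The right value depends on traffic volume.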

The Three Metrics

| Metric | Measures | Good | Needs Improvement | Poor |
|---|---|---|---|---|
| LCP (Largest Contentful Paint) | Loading | ≤ 2.5 s | 2.5-4.0 s | > 4.0 s |
| INP (Interaction to Next Paint) | Interactivity | ≤ 200 ms | 200-500 ms | > 500 ms |
| CLS (Cumulative Layout Shift) | Visual stability | ≤ 0.1 | 0.1-0.25 | > 0.25 |
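The thresholds translate directly into a small classifier, which is handy when bucketing stored samples. This is a sketch: `rate` and `THRESHOLDS` are illustrative names, and a value sitting exactly on a boundary is given the better rating. The `web-vitals` library also reports a `rating` field on each metric, so this is mainly useful for data that did not come through it.

```typescript
type MetricName = 'LCP' | 'INP' | 'CLS';
type Rating = 'good' | 'needs-improvement' | 'poor';

// [upper bound for "good", upper bound for "needs improvement"].
// LCP and INP are in milliseconds; CLS is unitless.
const THRESHOLDS: Record<MetricName, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

// Maps a raw metric value to its rating bucket.
function rate(name: MetricName, value: number): Rating {
  const [good, needsImprovement] = THRESHOLDS[name];
  if (value <= good) return 'good';
  if (value <= needsImprovement) return 'needs-improvement';
  return 'poor';
}

console.log(rate('LCP', 1400)); // good
console.log(rate('INP', 350));  // needs-improvement
console.log(rate('CLS', 0.3));  // poor
```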

Measuring Before Optimizing

Always measure in the field, not just in lab conditions.

// web-vitals library
import { onLCP, onINP, onCLS } from 'web-vitals';

// Serialize the fields needed to aggregate and debug each metric
function sendToAnalytics(metric) {
  const body = JSON.stringify({
    name: metric.name,
    value: metric.value,
    delta: metric.delta,
    id: metric.id,
    navigationType: metric.navigationType,
  });
  // sendBeacon queues the request so it can complete during page unload
  navigator.sendBeacon('/api/analytics', body);
}

onLCP(sendToAnalytics);
onINP(sendToAnalytics);
onCLS(sendToAnalytics);

Optimizing LCP

LCP measures when the largest content element becomes visible. It’s usually a hero image, heading, or text block.

1. Preload the LCP Image

<!-- In <head>: tell the browser about the hero image early -->
<link rel="preload" as="image" href="/hero-image.webp" fetchpriority="high" />

2. Use Responsive Images

<img
  src="/hero-800.webp"
  srcset="/hero-400.webp 400w, /hero-800.webp 800w, /hero-1200.webp 1200w"
  sizes="(max-width: 768px) 100vw, 800px"
  alt="Hero image"
  width="800"
  height="400"
  fetchpriority="high"
  decoding="async"
/>

3. Optimize Server Response Time

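// SvelteKit example: cache expensive data
import type { PageServerLoad } from './$types';

export const load: PageServerLoad = async ({ setHeaders }) => {
  // Browsers may cache for 1 hour, shared caches (CDNs) for 24 hours
  setHeaders({
    'Cache-Control': 'public, max-age=3600, s-maxage=86400',
  });
  // fetchExpensiveData is a placeholder for your own data loader
  const data = await fetchExpensiveData();
  return { data };
};

4. Inline Critical CSS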

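SvelteKit can inline small stylesheets into the server-rendered HTML when `kit.inlineStyleThreshold` is set. For webpack-based builds, use a tool like critters:

// webpack.config.js (critters-webpack-plugin is a webpack plugin, so it does not belong in vite.config.ts)
const Critters = require('critters-webpack-plugin');

// This inlines above-the-fold CSS and lazy-loads the rest
module.exports = { plugins: [new Critters()] };

Optimizing INP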

INP (Interaction to Next Paint) replaced First Input Delay (FID) as a Core Web Vital in March 2024. It measures the responsiveness of all interactions, not just the first one.

1. Break Up Long Tasks

// Before: one long synchronous operation
function processLargeDataset(items) {
  items.forEach(item => heavyTransform(item)); // Blocks for 300ms
}

// After: yield to the main thread
// (chunkArray is any helper that splits an array into fixed-size chunks;
// scheduler.yield() is not available in every browser, so feature-detect it)
async function processLargeDataset(items) {
  const chunks = chunkArray(items, 50);
  for (const chunk of chunks) {
    chunk.forEach(item => heavyTransform(item));
    await scheduler.yield(); // Let the browser handle pending interactions
  }
}

2. Use startTransition for Non-Urgent Updates (React)

import { startTransition, useState } from 'react';

function SearchComponent() {
  const [query, setQuery] = useState('');
  const [results, setResults] = useState([]);

  function handleChange(e) {
    setQuery(e.target.value); // Urgent: update input immediately
    startTransition(() => {
      // Non-urgent: can be deferred (filterResults is your own filter function)
      setResults(filterResults(e.target.value));
    });
  }

  // Render an input bound to query/handleChange and the results list here
}

3. Debounce Event Handlers

function debounce<T extends (...args: any[]) => void>(fn: T, ms: number): T {
  let timer: ReturnType<typeof setTimeout>;
  return ((...args: Parameters<T>) => {
    clearTimeout(timer);
    timer = setTimeout(() => fn(...args), ms);
  }) as T;
}

// Usage
input.addEventListener('input', debounce(handleSearch, 200));

Optimizing CLS

CLS measures unexpected layout shifts. These shifts are among the most frustrating experiences for users, because content moves just as they try to read or tap it.
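It helps to know how the number is built before fixing it. Each shift gets a score (impact fraction times distance fraction), shifts that follow recent user input are ignored, and the rest are grouped into session windows: a window closes after a 1 second gap or once it spans 5 seconds. CLS is the sum of the worst window. A sketch of that aggregation over already-collected entries follows. `ShiftEntry` mirrors the fields of a layout-shift performance entry, and `computeCls` and the sample data are illustrative.

```typescript
interface ShiftEntry {
  startTime: number;       // ms since navigation start
  value: number;           // layout shift score of this entry
  hadRecentInput: boolean; // true when the shift follows user input
}

const MAX_GAP_MS = 1000;    // a longer pause between shifts starts a new window
const MAX_WINDOW_MS = 5000; // a window never spans more than this

// Returns the largest session-window total, which is the page's CLS.
function computeCls(entries: readonly ShiftEntry[]): number {
  const shifts = entries
    .filter((e) => !e.hadRecentInput)
    .sort((a, b) => a.startTime - b.startTime);

  let worst = 0;
  let current = 0;
  let windowStart = -Infinity;
  let previous = -Infinity;

  for (const shift of shifts) {
    const sameWindow =
      shift.startTime - previous < MAX_GAP_MS &&
      shift.startTime - windowStart < MAX_WINDOW_MS;
    if (sameWindow) {
      current += shift.value;
    } else {
      current = shift.value;
      windowStart = shift.startTime;
    }
    previous = shift.startTime;
    worst = Math.max(worst, current);
  }
  return worst;
}

// Invented sample: two shifts close together, one later, one after input.
const sampleShifts: ShiftEntry[] = [
  { startTime: 100, value: 0.05, hadRecentInput: false },
  { startTime: 600, value: 0.04, hadRecentInput: false },
  { startTime: 3000, value: 0.02, hadRecentInput: false },
  { startTime: 3200, value: 0.3, hadRecentInput: true },
];

console.log(computeCls(sampleShifts).toFixed(2)); // 0.09
```

The first two shifts share a window and dominate the result. The large shift at 3200 ms does not count because it follows user input.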

1. Always Set Image Dimensions

<!-- Bad: causes layout shift when image loads -->
<img src="/photo.webp" alt="Photo" />

<!-- Good: browser reserves space -->
<img src="/photo.webp" alt="Photo" width="800" height="600" />

2. Use CSS aspect-ratio for Dynamic Content

.video-container {
  aspect-ratio: 16 / 9;
  width: 100%;
  background: #1a1a1a;
}

3. Reserve Space for Async Content

/* Reserve space for an ad slot or dynamic banner */
.ad-slot {
  min-height: 250px;
  contain: layout;
}

4. Avoid Inserting Content Above Existing Content

This is the most common CLS offender. Cookie banners, notification bars, and lazy-loaded headers all push content down.

/* Pin dynamic banners to the top of the viewport */
.notification-bar {
  position: fixed;
  top: 0;
  left: 0;
  right: 0;
  z-index: 50;
}

Real Results

On this portfolio site, after applying these optimizations:

| Metric | Before | After |
|---|---|---|
| LCP | 3.2s | 1.4s |
| INP | 180ms | 45ms |
| CLS | 0.12 | 0.01 |
| Lighthouse Score | 78 | 98 |

The biggest wins came from image optimization (LCP), removing synchronous third-party scripts (INP), and setting explicit dimensions on all media (CLS).
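To compare gains across metrics with different units, the before and after values can be expressed as relative reductions. A small sketch using only the figures from the table above. The function name is illustrative, and the Lighthouse score is left out because higher is better there.

```typescript
// Percentage by which a lower-is-better metric dropped.
function relativeImprovement(before: number, after: number): number {
  if (before <= 0) {
    throw new Error('The "before" value must be positive');
  }
  return ((before - after) / before) * 100;
}

// Values copied from the results table (LCP in s, INP in ms, CLS unitless).
const results = [
  { metric: 'LCP', before: 3.2, after: 1.4 },
  { metric: 'INP', before: 180, after: 45 },
  { metric: 'CLS', before: 0.12, after: 0.01 },
];

for (const { metric, before, after } of results) {
  console.log(`${metric}: ${relativeImprovement(before, after).toFixed(0)}% lower`);
}
```

That works out to roughly 56% for LCP, 75% for INP, and 92% for CLS.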

START WITH THE BIGGEST LEVERS

Most Web Vitals work is not a giant rewrite. It is a sequence of targeted fixes that remove very specific bottlenecks.

HIGH-LEVERAGE FIXES

These changes usually move the metrics fastest

- Preload and right-size your true LCP asset

- Remove or defer blocking third-party scripts

- Break up long interaction handlers and heavy transforms

- Set explicit dimensions or aspect ratios on all media and embeds

COMMON WASTE

These patterns slow teams down without solving much

- Chasing Lighthouse points without field measurement

- Optimizing tiny components while the hero image is still oversized

- Ignoring CLS until banners and ads start shifting the page

- Testing only on fast laptops and office Wi-Fi

Key Takeaways

- Measure in the field using the `web-vitals` library, not just Lighthouse

- LCP: preload hero images and optimize server response time

- INP: break long tasks, debounce handlers, use `startTransition`

- CLS: always set image dimensions and reserve space for dynamic content

- Small, targeted fixes often deliver the biggest improvements

- Test on real devices — your development machine isn’t representative

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

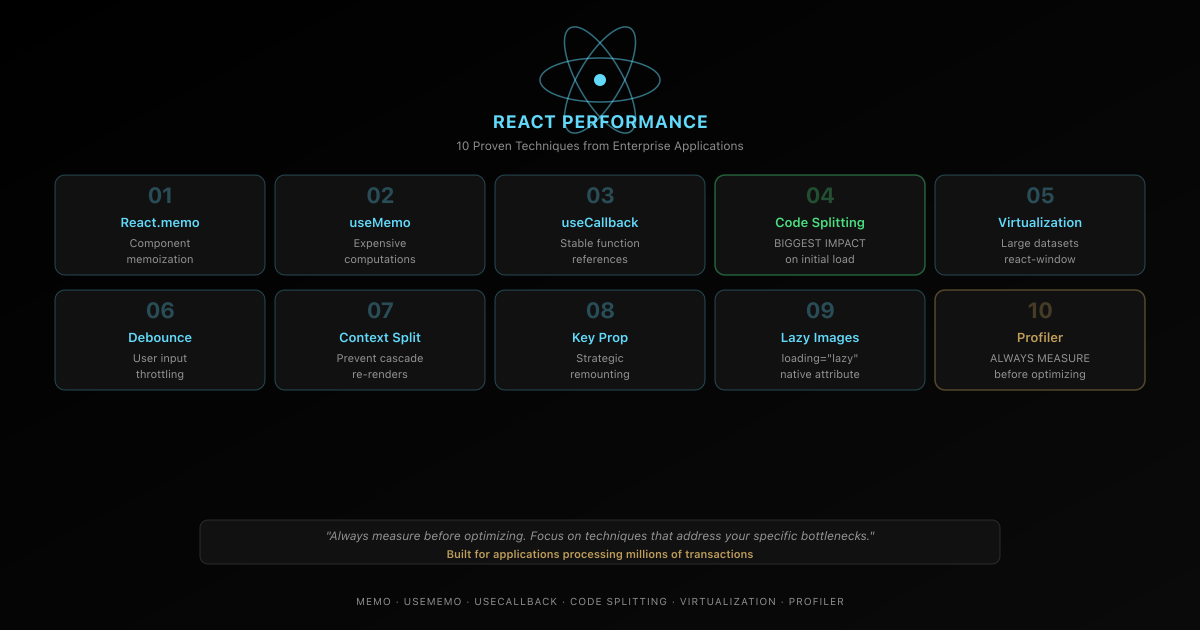

React

React Performance Optimization: 10 Proven Techniques

Learn 10 battle-tested React performance optimization techniques including memoization, code splitting, virtualization, and more from real enterprise applications.

AI & Developer Experience

The $1,100 Framework That Just Made Vercel's $3 Billion Moat Obsolete

One engineer + Claude AI rebuilt Next.js in 7 days for $1,100. The result: 4.4x faster builds, 57% smaller bundles, already powering CIO.gov in production. This is the moment AI-built infrastructure became real—and everything about software development just changed.

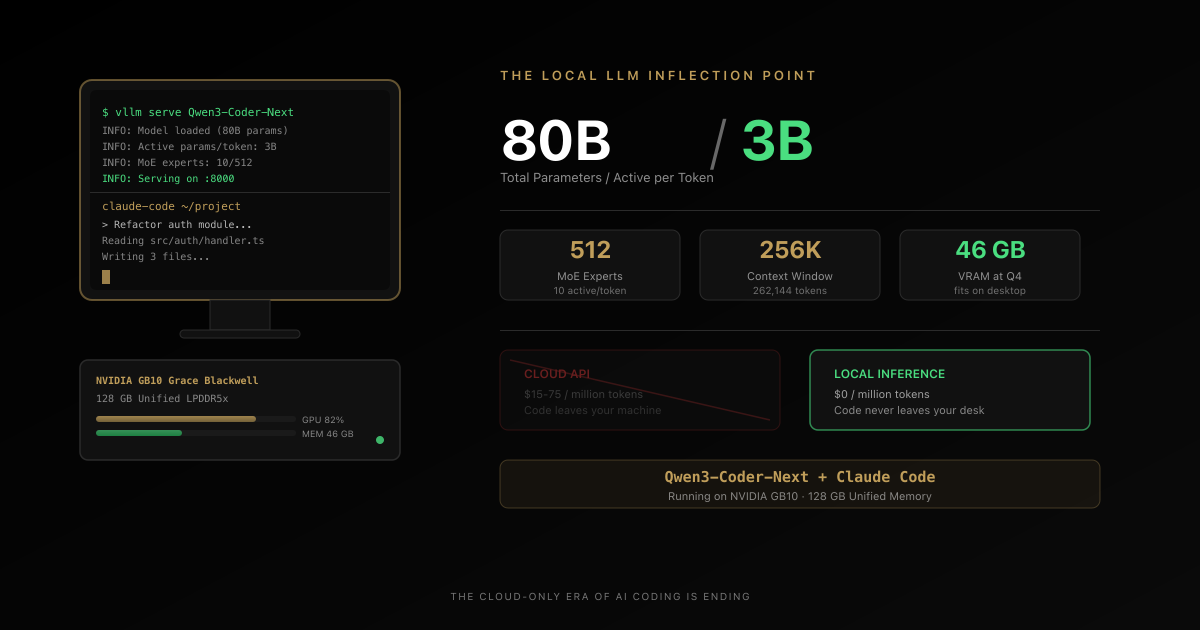

AI & Developer Experience

The Local LLM Coding Revolution Just Started — 80B Parameters on Your Desktop, 3B Active, Zero Cloud Bills

A tech journalist just declared he finally found a local LLM he wants to use for real coding work. Qwen3-Coder-Next runs 80 billion parameters on a desktop, activates only 3 billion per token, and plugs directly into Claude Code. The cloud-only era of AI coding is ending. Here is the full technical breakdown, the privacy argument nobody is making, and why this changes the economics of AI-assisted development.