DeepSeek V4 Is About to Test America’s AI Lead: What We Know Before Launch

DeepSeek V4 is expected in early March 2026. Here is what is confirmed, what remains unverified, and how it challenges U.S. AI rivals.

If DeepSeek ships V4 in the first week of March 2026, this won’t be just another model update. It will be a geopolitical product launch disguised as a technical release.

In short, DeepSeek appears to be using V4 to apply pressure on two fronts at once. First, it pressures U.S. model labs on cost and openness. Second, it pressures U.S. chip leadership by prioritizing Chinese hardware partners over Nvidia and AMD.

As of March 1, 2026, V4 is still expected rather than fully published. But we already have enough verified signals to understand the strategy and where the next battle in AI is heading.

If you searched for DeepSeek V4 release, DeepSeek vs U.S. AI rivals, or DeepSeek new AI model 2026, this is the evidence-first breakdown you need before making product or infrastructure bets.

TL;DR

- DeepSeek is expected to launch V4 in early March 2026, more than a year after R1 became a global flashpoint.

- Reuters reporting says DeepSeek gave Huawei and other Chinese suppliers lead time for optimization, while U.S. chipmakers were left out before launch.

- DeepSeek’s own public changelog shows no V4 release entry yet as of March 1, 2026, which means most hard specs are still unconfirmed.

- This launch matters less as a benchmark race and more as a stack-control race: model, chips, developer distribution, and political timing.

- The biggest mistake in current coverage is treating this as “just DeepSeek vs OpenAI.” It is really China AI ecosystem vs U.S. AI ecosystem.

EVIDENCE BOUNDARIES

This story only makes sense if you keep the line clean between what is confirmed, what is reported, and what is still speculation.

CONFIRMED

Signals that are public enough to trust

There is enough verified reporting, plus a clear absence of official documentation, to analyze the strategy without pretending the full release is already public.

- Reuters-linked reporting on a V4 launch window

- Reported China-first supplier optimization

- No official V4 changelog entry as of March 1, 2026

- Anthropic’s public distillation allegations

NOT YET PUBLIC

Specs that teams should not hallucinate

This is where coverage often gets sloppy and turns expectations into facts.

- Final architecture details

- Benchmark reproducibility

- Verifiable training hardware breakdown

- Final licensing and checkpoint policy

WHY IT MATTERS

The real competition is ecosystem-level

This release matters because it pressures model labs, chip vendors, and cloud ecosystems at the same time.

- Cost pressure on U.S. labs

- Sovereign inference demand

- China-native stack acceleration

TEAM RESPONSE

Use this as a planning signal, not a migration trigger

The right move is to prepare evaluation paths now without treating rumors as production readiness.

- Build contingency eval suites

- Prepare dual-vendor architecture

- Wait for real docs before high-stakes bets

DeepSeek V4 Release: What Is Actually Confirmed Right Now?

Here is the clean separation between confirmed facts and speculation:

Confirmed (as of March 1, 2026)

- Reuters reporting on February 25, 2026 said DeepSeek was preparing a major V4 update and had given domestic suppliers like Huawei early access for optimization.

- Reuters-linked reporting on February 28, 2026 said DeepSeek planned a broader V4 launch in the following week with multimodal capabilities.

- DeepSeek’s official API changelog currently lists major updates through DeepSeek-V3.2 (December 1, 2025), with no public V4 release note yet.

- Anthropic publicly alleged “industrial-scale distillation attacks” involving DeepSeek, Moonshot, and MiniMax in a February 24, 2026 statement.

Not Yet Publicly Confirmed

- Final V4 architecture details (parameters, active experts, long-context limits).

- Full benchmark suite and reproducible eval methodology.

- Official training hardware breakdown and verifiable chip provenance.

- Final licensing and release cadence for open checkpoints.

That distinction matters. Good strategy analysis starts with clean evidence boundaries.

| Claim | Status on March 1, 2026 | Evidence Level | What To Do With It |
|---|---|---|---|
| V4 launch in early March | Expected | Medium (Reuters-sourced reporting) | Track daily; plan contingencies |
| Multimodal capability | Expected | Medium | Prepare eval suites for multimodal tasks |
| Pre-launch domestic chip optimization | Reported | Medium | Assume stronger China-native deployment readiness |
| Official V4 model card/changelog | Not yet public | High (official docs absent) | Avoid hard architecture assumptions |
| Full benchmark reproducibility | Not yet public | High (no public eval package) | Do not migrate production on hype |

Why This Launch Is a Bigger Deal Than Another Model Benchmark

Most AI coverage still defaults to “Which model scores higher?” That’s yesterday’s lens.

V4 matters because DeepSeek is executing a platform leverage play:

- Ship a strong model with aggressive cost/performance positioning.

- Make it easier for Chinese chip and cloud players to run it first-class.

- Expand ecosystem gravity around non-U.S. infrastructure.

If this works, DeepSeek doesn’t need to “beat GPT on every benchmark.” It just needs to become the default open model path across large parts of Asia and cost-sensitive enterprise workloads.

That is enough to shift market power.

DeepSeek V4 vs DeepSeek V3.2: What Likely Changes

Most teams compare DeepSeek to GPT/Claude but skip the more useful lens: what changes from the previous DeepSeek generation.

| Area | DeepSeek V3.2 (Publicly Documented) | DeepSeek V4 (Expected) | Why It Matters for Teams |
|---|---|---|---|
| Public release signal | Documented in official changelog | Not yet in official changelog (as of Mar 1, 2026) | Release readiness remains uncertain |
| Positioning | Strong open-model value narrative | Flagship reset and geopolitical signaling | More executive attention and procurement pressure |
| Modality scope | Strong text-centric production usage | Reported multimodal expansion | New attack surface, new product options |
| Hardware messaging | Mixed public understanding | Reported China-first optimization emphasis | Impacts infra vendor strategy |
| Ecosystem impact | Developer momentum | Potential stack realignment catalyst | Can reshape model portfolio decisions |

The Timeline That Explains the V4 Moment

January 2025: DeepSeek became impossible to ignore

DeepSeek’s rise in early 2025 triggered a market shock narrative around lower-cost Chinese models, including app-store momentum and a broader repricing of AI infrastructure assumptions.

2025: Rapid model iteration without a V4 flagship reset

DeepSeek continued shipping updates (R1-0528, V3.1, V3.2 variants), but a full next-generation flagship line did not appear in public API release notes.

February 2026: Two signals converged

Signal one: Reuters-linked reporting said DeepSeek withheld pre-release optimization access from U.S. chipmakers while giving Chinese partners a head start.

Signal two: Anthropic’s public accusations intensified the U.S.-China AI trust conflict around model distillation and capability transfer.

Early March 2026: Expected V4 window

The expected launch window aligns with a politically visible period in China and comes at a moment when export controls, chip policy, and open-model competition are converging.

This is not accidental timing.

DeepSeek V4 vs U.S. Rivals: The Real Competitive Frame

The wrong question: “Is V4 smarter than GPT or Claude?”

The right question: “Can V4 anchor a viable China-first AI stack at scale?”

| Dimension | DeepSeek V4 (Expected) | U.S. Frontier Labs (Current Pattern) |
|---|---|---|
| Distribution model | Likely open/partially open ecosystem approach | Primarily API-controlled commercial access |
| Hardware alignment | China-native supplier optimization emphasized pre-launch | Primarily Nvidia-centric software + cloud deployment |
| Policy pressure | Operates under export-control constraints and domestic substitution goals | Operates with stronger access to leading-edge chips, but higher political scrutiny abroad |
| Speed vs transparency | Fast launches, limited pre-release transparency | Stronger model cards/safety docs in some cases, slower to open weights |
| Strategic objective | Ecosystem independence and inference sovereignty | Global platform dominance and enterprise lock-in |

This table is why V4 matters. It is less about one leaderboard and more about where developer gravity settles over the next 18 months.

Where U.S. Rivals Are Most Exposed

1) Cost narrative fragility

If DeepSeek keeps delivering near-frontier capability with aggressive pricing, U.S. labs face margin pressure even when they remain technically ahead.

2) Inference localization pressure

Countries and enterprises that want local control over AI infrastructure will keep evaluating open or semi-open alternatives. DeepSeek can capture that demand even without owning the top benchmark crown.

3) Chip-software co-optimization race

If Chinese chipmakers can reliably run top-tier models with good developer ergonomics, Nvidia lock-in weakens at the margins. That is a long game, but it starts with releases like V4.

Where DeepSeek Can Win Fast vs Where It Can Lose Fast

| Scenario | Likely Outcome for DeepSeek | Likely Outcome for U.S. Rivals |
|---|---|---|
| V4 ships on time with credible multimodal quality | Accelerated adoption in price-sensitive and sovereign markets | Stronger pressure to cut pricing and expand model access options |
| V4 launch slips or underdelivers | Narrative damage and reduced enterprise trust | Temporary relief, but open-model pressure persists |
| Documentation and evals are strong | Improved enterprise procurement confidence | Harder to dismiss DeepSeek as “only a cost play” |
| Governance/safety concerns dominate discourse | Adoption ceilings outside aligned markets | U.S. providers gain trust advantage in regulated sectors |

HOW TO READ THE V4 MOMENT

The bull case and the constraint case can both be true at the same time. Serious teams should evaluate both.

BULL CASE

Why V4 could matter strategically even without winning every benchmark

DeepSeek does not need universal frontier leadership to reshape the market. It needs enough capability, enough cost pressure, and enough ecosystem pull.

- Open or semi-open distribution can attract sovereign markets

- China-first optimization can strengthen alternative chip stacks

- A strong enough release can reprice inference expectations globally

CONSTRAINT CASE

Why execution quality still determines whether this becomes a real shift

Narrative momentum is not enough. Enterprise trust and operational maturity still decide production adoption.

- Weak docs or unverifiable evals can cap procurement confidence

- Governance concerns can limit international adoption

- A late or underwhelming launch would quickly damage the thesis

Where DeepSeek Still Has to Prove Itself

This is the part enthusiasts skip and serious builders should not.

1) Reproducible quality under real workloads

Synthetic benchmark screenshots are cheap. Real production reliability is hard.

2) Safety and policy governance

Capability without strong abuse controls becomes a trust ceiling, especially in global enterprise procurement.

3) Documentation depth

Deep technical notes, eval reproducibility, and deployment guidance determine whether developers actually stay in your ecosystem.

4) Global regulatory acceptance

Even a strong model can hit adoption ceilings if governance concerns block procurement in key markets.

What Product and Infra Teams Should Measure in Week 1 of V4

Do not ask “is it better?” Ask if it is production-viable for your exact workload.

| Metric | Why It Matters | Target Check |
|---|---|---|
| Task success rate | Real user outcome quality | Must beat or match current baseline |
| Cost per successful task | True efficiency signal | Must improve blended unit economics |
| Median and p95 latency | UX and orchestration stability | Must remain inside SLOs at load |
| Tool-call reliability | Agent/workflow confidence | Low retry rate under realistic traffic |
| Safety refusal precision | Compliance and abuse control | Blocks harmful prompts without over-blocking valid ones |
| Context handling stability | Long-session reliability | No steep quality collapse with long prompts |
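Several of these metrics fall out of the same per-task eval log. The sketch below is one possible way to compute them, not a prescribed harness: `EvalRecord`, its fields, and `summarize` are hypothetical names, and what counts as a "successful" task is whatever acceptance check your team already uses.

```python
from dataclasses import dataclass
from statistics import median, quantiles


@dataclass
class EvalRecord:
    # Hypothetical per-task log entry; adapt the fields to your own harness.
    succeeded: bool     # did the output pass your own acceptance check?
    cost_usd: float     # total spend for the task, retries included
    latency_ms: float   # end-to-end latency
    tool_retries: int   # tool calls that had to be retried


def summarize(records: list[EvalRecord]) -> dict[str, float]:
    """Aggregate one eval run into the week-1 metrics."""
    if not records:
        raise ValueError("no records to summarize")
    successes = sum(r.succeeded for r in records)
    total_cost = sum(r.cost_usd for r in records)
    latencies = sorted(r.latency_ms for r in records)
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    if len(latencies) > 1:
        p95 = quantiles(latencies, n=100, method="inclusive")[94]
    else:
        p95 = latencies[0]
    return {
        "task_success_rate": successes / len(records),
        # Failed attempts still cost money, so divide total spend by successes.
        "cost_per_successful_task": total_cost / successes if successes else float("inf"),
        "median_latency_ms": median(latencies),
        "p95_latency_ms": p95,
        "mean_tool_retries": sum(r.tool_retries for r in records) / len(records),
    }
```

Dividing total spend by successful tasks, rather than by all tasks, is the design choice that matters here: a cheaper model that fails more often can still lose on unit economics.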

Practical Recommendations for Engineering Leaders

If you’re running an AI product roadmap in 2026, do this now:

- Run a two-track model strategy. Keep one U.S. frontier API path and one open-model fallback path.

- Benchmark for your workload, not Twitter hype. Evaluate latency, cost per task, tool-call reliability, and failure modes.

- Treat chip dependency as a risk surface. Vendor concentration is now a board-level issue, not just an infra detail.

- Plan for model substitution. Your architecture should swap providers without product outages.

- Add policy observability. Monitor legal and compliance shifts like you monitor p95 latency.

- Use evaluation gates before rollout. No model reaches production without passing pre-defined quality, safety, and cost thresholds.

- Separate model from product logic. Keep prompt orchestration and business rules provider-agnostic.

- Instrument failure analytics deeply. Capture refusal drift, hallucination classes, and tool-calling errors over time.

The teams that win this cycle will be the ones that are architecturally adaptable, not ideologically loyal to one vendor.
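The evaluation-gate recommendation can be made concrete with a small check that runs before any rollout. This is a minimal sketch under stated assumptions: `Gate` and `failed_gates` are hypothetical names, and the thresholds in the usage example are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    # Hypothetical gate definition: one metric, one pre-agreed threshold.
    metric: str
    threshold: float
    higher_is_better: bool


def failed_gates(metrics: dict[str, float], gates: list[Gate]) -> list[str]:
    """Return a description of every gate that did not pass (empty list = go)."""
    failures = []
    for gate in gates:
        value = metrics.get(gate.metric)
        if value is None:
            # A metric nobody measured is treated as a failure, not a pass.
            failures.append(f"{gate.metric}: missing")
            continue
        if gate.higher_is_better:
            passed = value >= gate.threshold
        else:
            passed = value <= gate.threshold
        if not passed:
            failures.append(f"{gate.metric}: {value} vs threshold {gate.threshold}")
    return failures


# Usage with placeholder thresholds.
gates = [
    Gate("task_success_rate", 0.85, higher_is_better=True),
    Gate("p95_latency_ms", 1500.0, higher_is_better=False),
    Gate("cost_per_successful_task", 0.05, higher_is_better=False),
]
blockers = failed_gates({"task_success_rate": 0.90, "p95_latency_ms": 1200.0}, gates)
```

Here `blockers` contains one entry because the cost metric was never measured. Failing closed on missing metrics keeps a half-finished eval from passing by default.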

LEADERSHIP PLAYBOOK

This launch should push teams toward architectural flexibility, not toward reactive vendor loyalty.

ARCHITECTURE

Design for provider substitution

Do not let one model vendor become embedded in core product logic.

- Separate model adapters from business rules

- Keep prompts and orchestration portable
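One way to keep adapters separate from business rules is a narrow interface that every provider implements, with fallback handled outside product code. The sketch below is illustrative only: `ModelAdapter`, `CallableAdapter`, and `complete_with_fallback` are hypothetical names, and the provider calls are stubbed rather than tied to any real SDK.

```python
from typing import Callable, Protocol


class ModelAdapter(Protocol):
    # The only surface business logic is allowed to depend on.
    name: str

    def complete(self, prompt: str) -> str: ...


class CallableAdapter:
    """Wraps any provider call (SDK client, HTTP request, local model) behind one interface."""

    def __init__(self, name: str, call: Callable[[str], str]) -> None:
        self.name = name
        self._call = call

    def complete(self, prompt: str) -> str:
        return self._call(prompt)


def complete_with_fallback(adapters: list[ModelAdapter], prompt: str) -> tuple[str, str]:
    """Try adapters in priority order; return (provider name, completion)."""
    errors = []
    for adapter in adapters:
        try:
            return adapter.name, adapter.complete(prompt)
        except Exception as exc:  # broad on purpose: any provider failure triggers fallback
            errors.append(f"{adapter.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Usage with stubbed providers standing in for real clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def open_model_fallback(prompt: str) -> str:
    return f"echo: {prompt}"


provider, text = complete_with_fallback(
    [CallableAdapter("primary", flaky_primary), CallableAdapter("fallback", open_model_fallback)],
    "summarize this ticket",
)
```

Because product code only sees `complete`, swapping or reordering providers becomes a configuration change rather than a rewrite.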

EVALUATION

Benchmark your own workload

Public hype is less useful than measuring success rate, unit economics, and failure modes on real tasks.

- Shadow traffic

- Task success rate

- Safety and tool reliability

RISK

Treat chip dependency as strategic exposure

Model choice and hardware dependency are now linked risks, not separate decisions.

- Track vendor concentration

- Watch policy shifts like infra incidents

Common Mistakes in DeepSeek V4 Coverage

Mistake 1: Treating one launch rumor as settled fact

A reported launch window is not a released model card. Keep a strict line between what is expected and what is shipped.

Mistake 2: Reducing the story to benchmark screenshots

Even strong benchmark gains are not enough without deployment maturity, governance confidence, and operational support.

Mistake 3: Ignoring hardware and policy constraints

Model quality is only one layer. Chip availability, export controls, and compliance constraints decide real adoption speed.

Mistake 4: Assuming one-vendor strategies are still safe

In 2026, single-provider model strategy is a concentration risk. Multi-model architecture is now the practical default.

FAQ

Is DeepSeek V4 officially released as of March 1, 2026?

No public DeepSeek API changelog entry confirms a V4 release yet as of March 1, 2026. Current reporting points to an expected launch window in early March.

Why are people framing this as a challenge to U.S. rivals?

Because the challenge is not only model quality. It combines model performance, pricing pressure, and a deliberate shift toward Chinese chip and cloud alignment.

Is this only about China vs the United States?

No. It also affects any region pursuing AI sovereignty, lower inference costs, or reduced dependence on a single vendor stack.

Does this mean Nvidia is no longer central to AI?

No. Nvidia remains dominant globally. The key issue is whether more inference demand can gradually shift to alternative stacks in constrained or sovereign environments.

Are Anthropic’s distillation allegations proven in court?

No. Anthropic has made public allegations and described technical detection methods, but legal outcomes are separate from public claims.

Should product teams switch from U.S. models to DeepSeek immediately?

Not blindly. The right move is a measured dual-vendor strategy, workload-based benchmarking, and strict governance checks before production migration.

What is the best rollout strategy if V4 launches this week?

Use a staged approach: sandbox evals, shadow traffic, limited production cohort, then broader rollout only after KPI and safety gates pass.
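The staged approach can be written down as an explicit promotion rule so that nobody skips a step under launch pressure. This is a rough sketch: the stage names mirror the list above, `next_stage` is a hypothetical helper, and holding at the current stage on a failed gate (rather than rolling back) is an assumption.

```python
# Ordered rollout stages, taken from the staged approach described above.
STAGES = ["sandbox_evals", "shadow_traffic", "limited_cohort", "broad_rollout"]


def next_stage(current: str, kpi_gate_passed: bool, safety_gate_passed: bool) -> str:
    """Advance exactly one stage, and only when both gates pass."""
    index = STAGES.index(current)  # raises ValueError on an unknown stage
    if not (kpi_gate_passed and safety_gate_passed):
        return current  # assumption: hold in place instead of rolling back
    return STAGES[min(index + 1, len(STAGES) - 1)]
```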

Final Take

“DeepSeek to release long-awaited AI model in new challenge to US rivals” is a good headline, but an incomplete thesis.

The deeper story is this: AI competition is no longer model-vs-model. It is ecosystem-vs-ecosystem. V4 is a test of whether China can scale a full-stack alternative under export pressure, while U.S. labs defend performance, trust, and platform control.

If you lead AI products, don’t watch this launch as a spectator event. Use it as a forcing function to harden your architecture, diversify your model strategy, and stop assuming one ecosystem will stay dominant forever.

If you want to go deeper on this shift, start with my breakdown of the distillation dispute and what it means for model security and policy.

Sources

- Reuters: Exclusive - DeepSeek withholds latest AI model from U.S. chipmakers including Nvidia (Feb 25, 2026)

- Reuters (syndicated): DeepSeek expected to unveil V4 and challenge U.S. rivals (Feb 28, 2026)

- DeepSeek API Docs: Official Change Log (accessed Mar 1, 2026)

- Anthropic: Detecting and preventing distillation attacks (Feb 24, 2026)

- TechCrunch: DeepSeek displaces ChatGPT as the App Store’s top app (Jan 27, 2025)

- Reuters coverage via India Today: DeepSeek plans wider V4 release in challenge to U.S. rivals (Feb 28, 2026)

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

AI & Enterprise

Nvidia's OpenClaw Strategy: Why Jensen Huang Says Every Company Needs an AI Agent Plan

At GTC 2026, Jensen Huang said every company needs an OpenClaw strategy. Here is what it means and what U.S. teams should do next.

AI & LLMs

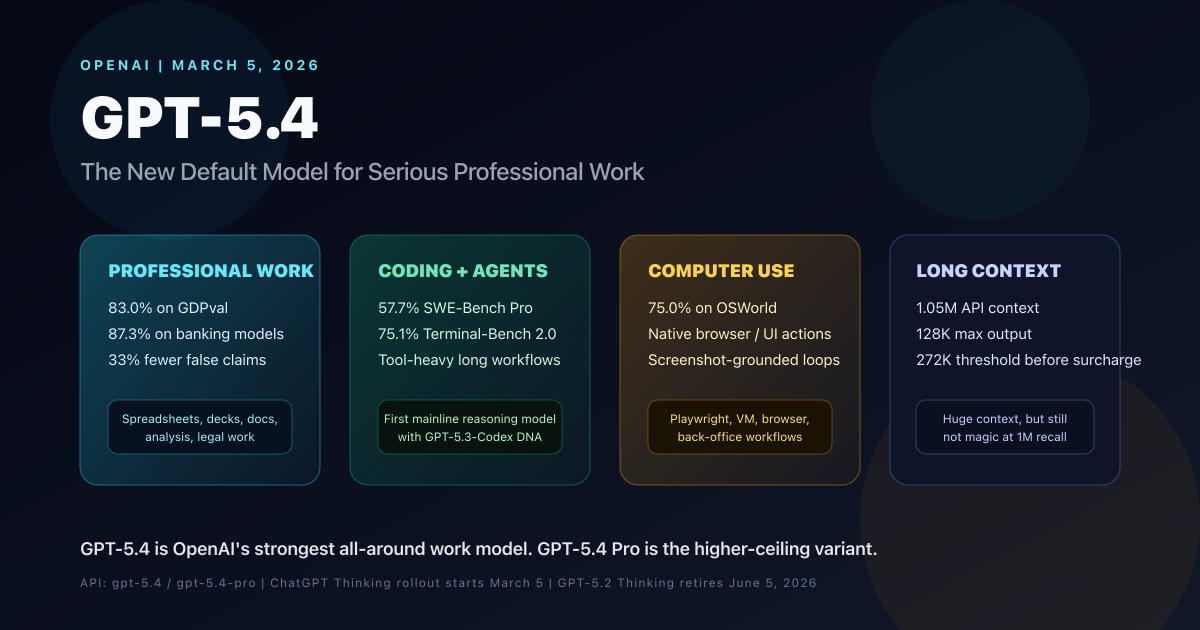

OpenAI GPT-5.4 Complete Guide: Benchmarks, Use Cases, Pricing, API, and GPT-5.4 Pro Comparison

OpenAI GPT-5.4 is the new mainline reasoning model for professional work. This complete guide covers benchmarks, use cases, pricing, API details, long-context behavior, computer use, tool search, GPT-5.4 Pro, and how it compares with GPT-5.2 and GPT-5.3-Codex.

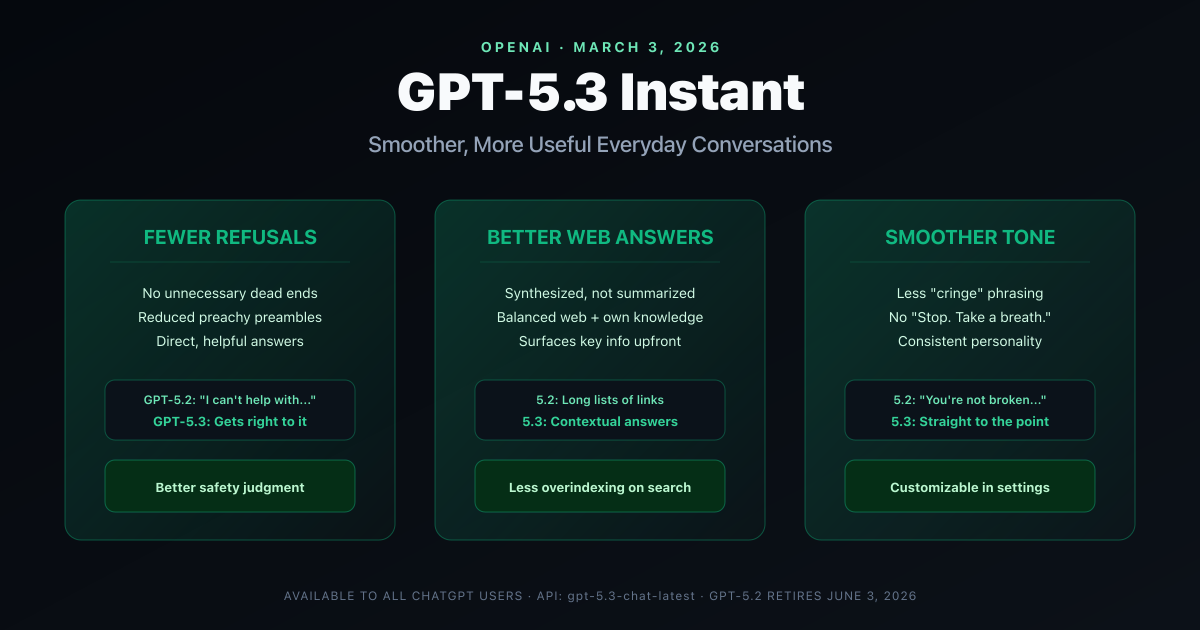

AI & LLMs

OpenAI GPT-5.3 Instant: Fewer Refusals, Better Web Answers, and a Smoother ChatGPT

OpenAI releases GPT-5.3 Instant with 26.8% fewer hallucinations, reduced unnecessary refusals, better web-sourced answers, and a smoother conversational tone. Full breakdown of what changed, why it matters, and what developers need to know.